Curious Conversations, a Research Podcast

"Curious Conversations" is a series of free-flowing conversations with Virginia Tech researchers that take place at the intersection of world-class research and everyday life.

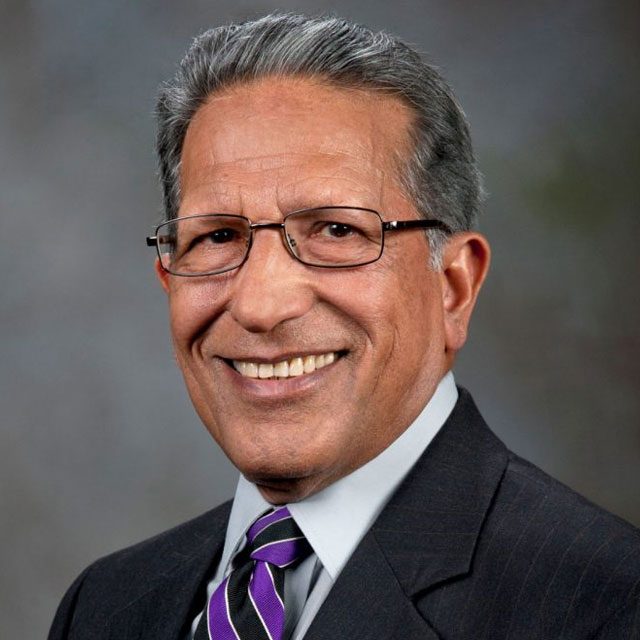

Produced and hosted by Travis Williams, assistant director of marketing and communications for the Office of Research and Innovation, episodes feature university researchers sharing their expertise, motivations, and the practical applications of their work in a format that more closely resembles chats at a cookout than classroom lectures. New episodes are shared each Tuesday.

“Curious Conversations” is available on Spotify, Apple Podcasts, and YouTube.

If you know of an expert (or are that expert) who’d make for a great conversation, email Travis today.

Latest Episode

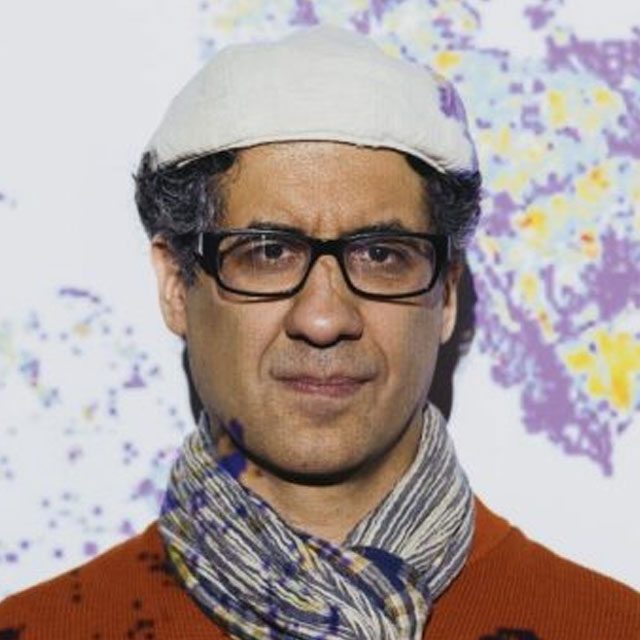

Ben Katz joined Virginia Tech’s “Curious Conversations” to talk about the impact of lifestyle choices on brain health and cognition. He shared insights about how cardiovascular exercise, spending time in nature, and maintaining a healthy diet can enhance cognitive function. He also talked about the importance of sleep and benefits of social interactions, as well as what changes he has made to best support his own cognition.

(music)

Travis

What do you think about how you think? What do you think can improve how you think? What do you think can improve your brain? Well, according to Virginia Tech's Ben Katz, spring is one of those things. In fact, he was recently a part of an article titled, Spring is Good for Your Brain. So naturally I wanted to know what it is about this season that makes it so magical for my cognition. And thankfully Ben was kind enough to join the podcast to answer that question and many more.

Ben is an Associate Professor of Adult Development and Aging within the Department of Human Development and Family Science at Virginia Tech. His research focuses on interventions to improve executive function capacities including non-invasive brain stimulation, cognitive training, and lifestyle change.

So Ben and I talked a little bit about spring and it turns out that it's actually less about the season itself and more about the choices that you and I are more likely to make during the season. Specifically we talked about cardiovascular exercise, spending time in nature, and how maintaining a healthy diet can actually enhance cognitive function. He shared with me some of what we do and don't know about those specific exercises and why they're actually good for our brain. And we also talked about the importance of sleep and the benefits of social interaction when it comes to our cognitive abilities. And with him being an expert in this subject, I was really curious to learn what he does to ensure that his brain functions as well as it possibly can. So I left this podcast with a little to-do list for myself and hopefully you will as well.

I'm Travis Williams and this is Virginia Tech's Curious Conversations.

(music)

Travis

I want to talk to you about habits and things that we can do to help with our brain cognition. And so I was thinking that maybe a good place to just start that conversation is to just simply kind of lay out what is, what is brain cognition? What are we talking about?

Ben

Sure, so cognition, the word cognition more generally really just refers to all the processes that are involved in thinking, right? So these are things like attention and memory and executive functions and processing speed aspects of that. All of those things go into cognition. So it's really an umbrella term that refers to all these things involved with our ability to just think from day to day and moment to moment.

Travis

Okay. Well, recently I know that you have been talking a lot about brain cognition related to the spring. People have been very interested in this, about springtime and how it is good for our brain health and our cognition. And I'm curious, is there anything magical about the spring when it comes to our cognition? And if there is, what is it?

Ben

Sure, yeah, I get it, right? This is, I've been talking about that a little bit because yes, it is springtime right now, right, in Blacksburg, although it's felt more or less like that over the last couple of weeks. And so to me, what's exciting about spring is not necessarily one thing, but all the different things that come along with it, right? Because it can be a little bit complex. So for example, you've got warmer weather, right? More sunlight. And we can talk about each of those different things and what they do.

But what I get excited about with spring is that having that additional time and ability to want to spend time outside, right, really facilitates more time spent doing cardiovascular exercise, which we know is really good for our brains and for cognition, and also facilitates our ability to spend time in nature, which there's some really cool evidence that that's positive, as well as a whole host of other activities from social engagement to cognitive engagement, different things that seem to potentially be protective for your brain later in life or even just help to enhance cognition at any point.

Travis

Okay, so the season itself may be not inherently magical, but maybe what it allows us to take on. Maybe those things are, maybe that's where the real magic is.

Ben

Exactly, right? Because if people say, you know, there's certain things they like about spring, right? You have longer days. And actually, they're less long than summer, right? Longer than winter. But, you know, there's some evidence even that having more light, right, that that actually might make you sleep a little bit less long. And that's not always a good thing, although there's more mixed research around what that actually means for the quality of sleep. Or, you know, for example, the warmer weather, well, you know, warmer weather in and of itself, if it's really hot, that's actually not always so great for cognition. There's some evidence around that from people in warmer climates or really hot climates. But exactly, it's these other things that I think it allows you to do that are really positive.

Travis

Yeah, I have a lot of anecdotal evidence about it being too warm in a room, me not being able to, you know, just to even stay awake.

Ben

There's actually a ton of research on that. If you've ever gone to a conference and they keep it really cold sometimes, there's good empirical reasons why. If it's super ice cold, right, that's not great. But if it's a little bit on the cooler side, there is some evidence that that actually can help with some things, maybe our ability to attend or to stay alert and things like that.

Travis

I will remind those folks that it's not my fault that I fell asleep there. That's not my fault. Science says not Travis's fault.

Ben

Yes, it's not your fault it's too hot.

Travis

Well, you mentioned exercise and specifically cardiovascular exercise. I'm curious, what is it about cardiovascular exercise? What is it doing to help my cognition?

Ben

You mentioned cardiovascular exercise, which is good because exercise can be all these different things, right? There's yoga and stretching, but also, yeah, cardiovascular aerobic exercise, things like running and biking and swimming or hiking, right? These are things that get our heart pumping, right? And there's a number of pathways through which those can impact cognition and the brain especially, but really one of the most direct ones is just the fact that our cardiovascular health is so closely tied to our brain health. And it's not that surprising when you start to think about the fact that your brain is highly vascularized, you need blood to bring oxygen to your brain. And so the efficiency of your cardiovascular system is very, very closely tied to brain health. And so anything you can do to increase your cardiovascular health, right, that's going to probably over the long term help you to maintain your brain health as well.

Travis

Yeah, that makes a lot of sense to me, just the idea that there's a lot of blood up here. And so the better it's flowing, like the better it'll work. Like in very, very simple terms.

Ben

Yeah, we all kind of know that, but we don't always think about it, right? You know, having a stroke, for example, is very, very bad, right? And that's literally, right, cutting off that blood flow to the parts of the brain that need it and all the nutrients and oxygen that that would provide. So exactly, we kind of implicitly understand that our cardiovascular health is important, but we don't always think, I think, about how important diet and exercise are for the brain in addition to the body.

Travis

Yeah. Well, you mentioned being in nature as well. What do we know about nature? What happens when we spend time in nature to us?

Ben

Yeah, so there's a bunch of really interesting studies on this. And to some extent, right, there's still good work to be done. But there's some studies that suggested that, say, being in a quiet green space, right, so surrounded by trees, think of a walk in the forest with bird calls, minimal distractions. This is really usually as opposed to being in a more distraction-heavy urban setting or something like that. There's some evidence that being in nature can be sort of restorative to things like attention. And again, as I mentioned, right, there's still some questions about exactly how and when this works, what the boundary conditions are for this. But there's even been some studies that suggested even looking at pictures of nature may be positive. But the way I look at it is that we know these settings are probably good for mood. We know these settings may have some restorative effects by removing you from other distraction-heavy settings or things that could distract you or could interfere with your cognition in those ways. And so to me, it's, well, if you're out in the springtime, doing cardiovascular exercise in nature, right? That's probably one of the best situations you could be in, right?

Travis

Yeah, that, I mean, it definitely sounds like that that's kind of multiple layers of good things for you. My wife has this belief she shared with me many times. It may be steeped in research. It may not, I do not know, but she believes that when we go on hikes into the woods and the forest and we're in those types of environments, that it's really good for her brain. And it's specifically, she thinks it's good for our child's brain. Is that true? Do you want to debunk her? I'll tell her not to listen to the podcast if you want to.

Ben

No, I wouldn't. I wouldn't debunk her. The only challenge with that is sort of how do you separate out? No, no. First of all, there's actually been some research on hiking even, I think, that's positive. And there's all this research showing it's good for mood. When it comes to, like, you know, memory or attention, there's especially a lot of research on attention, right? I think the only thing that's tricky is separating out. We know that there are both transient and long-term effects of exercise, right? And hiking can be very, very good cardiovascular aerobic exercise. You get your heart pumping, you're hiking uphill. Right? We're right in the middle of the, I don't know if you ever go to the Appalachian Trail, there's a part right near Virginia Tech, something called the Keffer Oak that my wife and I go to all the time. There's so many areas to hike around here, or McAfee Knob or something like that. And so I think the only challenge is, no, I think your wife is right on. I think the question is, to what extent is it the exercise versus to what extent is it being surrounded by a natural environment, spending time off of your phone, right? There's all these different parts of it that may be contributing to that. And I guess my argument would be, if it's something that makes you feel good and it's healthy for you overall, then we'll, as researchers, eventually figure out what parts of that are maybe most important. But definitely, the evidence suggests that it's something you can do. If it's safe and healthy for you to hike, then it's probably a really good activity for your cognitive health as well.

Travis

Yeah, just keep doing it. We'll figure out why it works later. Just keep doing it in the meantime is what it sounds like to me. It does.

Ben

I mean, why it works matters, right? But like, to me, it's like probably the cardiovascular exercise is maybe the first thing, but then some of the other aspects of being in a quiet setting, having fewer distractions around you, right? There's a little bit of evidence that that kind of thing can be restorative too.

Travis

Yeah, well, I'm gonna cut the clip where you said that my wife was right and we'll just use, we'll use that. I'm gonna use that for multiple things. Well, you mentioned, I think, diet as well. And so I'm curious, what do we know about diet and the foods that we eat and how they interact with us when it comes to cognition?

Ben

Yeah, so a lot of the research we're doing in the lab right now is focused on that with my colleagues, Brenda Davy and Alex DiFeliceantonio up at the FBRI on the Roanoke campus. And so we've been really interested in how what we call dietary patterns are associated with cognition. So you've probably heard of like a Mediterranean pattern diet, right? So these are the diets that were adopted by folks around the Mediterranean. So, right, basically lean proteins and fish, whole grains, fruits and vegetables, right? And more recently in our lab, we've also been focused on a diet called the DASH diet. And that's a diet that's focused on stopping hypertension. That's what the H stands for. And it's really low in sodium. And we found that people that have a Mediterranean diet or even a DASH diet, that actually adhere to those types of diets, are more likely to have less cognitive decline when they're older. We, right, all experience some cognitive decline, right? We get a little less sharp as we age. But the folks that really adhere to the DASH diet had less of that.

And so what we've been trying to really figure out more recently is, well, dietary patterns, right, include lots of different things. And we think the pattern itself is more important than any individual item. But we've been trying to explore which food items may be most problematic. And so a second investigation we did was really looking at ultra-processed foods. Because people know, right, there's all this conversation around ultra-processed foods. I guess we're thinking about springtime, right? Picnics, we might have those types of things outside. And what we found was kind of surprising because people are like, they're bad, and they may be for other aspects of health, but for the brain, specifically for your risk of developing dementia, it looks like actually only two of them were really problematic, and those are processed animal products, so think about like your off-the-shelf deli meat or something like that, and sugar-sweetened beverages. So that's really sodas most of the time. And the actual increase was pretty significant. We're talking about, you know, for the deli meats, it was something like 20% or something like that for each serving. And this is on average, right? This is not like you eat a single serving of a deli meat one day, you're gonna have a 20% higher risk. This is if you're doing this every day, right? But as far as why that is the case, we think that is basically probably the sodium and maybe nitrates and some other things that are in the deli meats and then probably the sugar in the sugar-sweetened beverages. And so what that tells me is ultra-processing or food processing isn't by itself necessarily bad, right? But when we talk about brain health, it might be specific things that come along with that processing that are really problematic. And so yeah, I've cut those out, sort of, from my diet.

Travis

So like maybe it's not just having one hot dog on the 4th of July that you're talking about, but it's these patterns that are maybe the biggest takeaway. And also, is there a connection there between some of those things and heart health as well? Like, are we kind of at the same thing here?

Ben

Yes. So this again, this is true for exercise, it's true for diet. The things that have the greatest influence on brain health and cognition, we're probably talking about multiple pathways, right? So for example, with exercise, yes, you improve cardiovascular health, but there's also all these other things that it might be involved with from, from like BDNF, which is brain derived neurotrophic factor, which is basically something that's supportive of learning, right? That you actually see more of that BDNF in your brain when you, you know, for people that exercise more or after exercise. For diet, it's probably both cardiovascular health, but it might also be other aspects of metabolic health related to blood sugar and things like that, as well as maybe specific things, right? You see a lot of people talking about like, okay, well, if I'm having olive oil with omega-3s or something like that. And my argument is still, it's good to know about individual food items, but the pattern probably matters more. And I keep coming back to this though, cardiovascular health though, these other things may matter a little bit, but it's probably the cardiovascular health is a significant contributor to that above a lot of other things. So yeah, it's really the fact that that's related to your heart health. That's a big, big, big piece of the puzzle.

Travis

And yeah, well, I think of the things that you have mentioned and we've kind of talked about, one of the things I don't think that you have mentioned in any of your answers so far that I am curious about is this aspect of social wellbeing and social interaction. What role does that play in our brain health?

Ben

Yeah. So again, right, it's multiple pathways. So when we say social interaction, what do we mean? Playing a team sport, right? Playing board games with friends, volunteering in a group or something like that. These are all things where you may be getting exercise, right? You may be having opportunities for cognitive engagement. We can talk about that, too. But also, yes, there's some evidence that social interaction, right, through those types of things, can be really positive. Now you're asking what does it do, right? Well, we know that it may improve emotional health and more affective things, right, with an A, but there's some really interesting research with older adults that suggests that people that are more socially engaged, right, that they also have better cognitive function into late life. And, you know, the reasons why I think, again, are probably kind of complex. Some of it may be, you know, the boost to affective health that may then contribute to other things you're doing or the fact that you may be doing other activities that are cognitively engaging or that are good for your health at the same time. But in general, you also have to be careful because some of that research is correlational or cross-sectional, where we don't know whether people who have better cognition are more likely to be social. But there's been a lot of interventions, multimodal interventions to improve cognition in late life, that really have started to incorporate a social component because at the least, right, if you're doing something with somebody else or you're part of a team, you may be more likely to maintain the intervention or to keep at it, keep trying even when it gets hard.

Travis

Yeah, well, I mean, I guess what I'm thinking right now is that, like you mentioned before, we should all be doing some sort of cardiovascular exercise in nature, probably with friends, and then not drinking sugary sodas afterwards.

Ben

Yeah, that would be my suggestion, right? It's, you know, exactly, maybe cutting out the deli meat, which is hard, right? Because again, you just mentioned it's not like having one once for the 4th of July is bad, having a hot dog or something like that. And that's certainly not the case. It's really, you know, like what I've done with that is switching to, you know, buying or making a roast chicken at the beginning of the week and using that in stuff too. But yeah, exactly. It's all these different things together. And that's why there's been a big focus on multimodal interventions in our labs, trying to do that, right? Not just exercise but exercise and diet, or not just diet, but diet and cognitive engagement or something like that. How can you combine multiple interventions together? Because really the story is there's not one silver bullet, there's not a royal road to being able to maintain cognitive function.

Travis

Yeah, yeah. Well, I'm glad you mentioned the rotisserie chicken because I think that that's just a great life hack for anybody. I mean, even if you're not worried about any of the other health stuff, like if you've listened this far, like that's an excellent life hack anyway.

Ben

Yeah, yeah. Well, and it can be as cheap as or, you know, it's a little tricky to plan it and buy it. And yes, if you buy a rotisserie chicken from the store, it may have more processing than if you make your own chicken, etc, etc. Right. But in general, especially with off-the-shelf deli meats, that's something where it probably has a lot of sodium in it. Right. If you have more control over how much sodium is going into what you're making, that's probably going to be better. That's more consistent with an anti-hypertensive diet. Right.

Travis

Is there any other area that we haven't really talked about that is just really good for cognition that maybe I'm just overlooking?

Ben

There's one, yeah, there's sleep, which is critical, right? Not only for literally, it's when a lot of memory consolidation takes place, but people's sleep patterns, both time and quality, right? It's something that's really important. You know, I mentioned, we talked about maybe your sleep time actually gets a little bit lower, but maybe as long as your quality is consistent, actually exercise can improve your sleep quality. But yeah, sleep is probably up there. I would say, you know, top three lifestyle behaviors, maybe top four, along with actually not smoking is probably the other one. Yeah.

Travis

Yeah, how much, I mean, this is the question anybody's gonna ask you is how much do I need to sleep?

Ben

And so that's a fun question because it actually changes based on both your lifestyle, but also your age, right? You might find that you're sleeping a little bit less as you age, you know, so it's two things. It's the time spent sleeping and as far as we can tell, right, the average for people is, you know, six to eight, and that's really a window where too little and too much both are kind of correlated with less optimal outcomes. But also don't forget quality, which is really like uninterrupted sleep, and that gets harder as we get older for a number of reasons.

But it's also very, very important, you know, being able to sleep through the night, not taking as long to get to sleep, right? And those are things where actually like exercise, people that exercise regularly have better sleep quality. Sleep hygiene is really important. So you might've heard of like, right, how often are we doom scrolling or on our phones late into the evening? Putting your phone away, leaving it in another room, that's something that can be really positive for that. And overall, sleep is also actually associated with cardiovascular health. But it has also been very, very closely tied to cognitive performance and cognitive health.

Travis

Well, you mentioned a couple of things as we've been talking that you have done. I'm curious, is there any other change or shift that you've made as you've gotten into this research just to help keep yourself healthy?

Ben

Yeah, that's a great question. What have I done and what do I recommend, right? When I'm talking to other people about it, I think one of the biggest ones for me is, you know, trying to be more conscious about distractions in my everyday life and things that can interfere with my attention, right? And this isn't necessarily, you know, there's maybe some evidence that over the long term, those things can be problematic, but, you know, you think about this from a day-to-day moment, right? Your ability to sustain your attention on task, to be able to focus on the thing you want to focus on. How many things can interrupt that? So I've been more active in trying to find ways to reduce the number of notifications I get. I actually own a phone that has a, I don't think there's a lot of these, but it has a switch on it, and I can actually turn off notifications completely with a switch. So it makes it a lot easier. There's actually even other phones that have even fewer notifications. They make phones that are just made for people that want to avoid that. But I've really tried to kind of curate the amount of distractions I have. And of course, right, as a professor, I have students that want to come talk to me. I have office hours. I have lots of fun and exciting things happening every day that I actually want to do. And so it's kind of a balance, right, in figuring out, how can I remove some of these distractions? We talked about being in nature, right? Well, I'm right near the Hahn Garden. And so I will very frequently, if I notice myself feeling a little bit of increased cognitive load or burden or fatigue, I'll go for a walk because it's close to me. It's a quick time to get out there. And at least personally, I find that restorative. There's very little evidence that getting up and walking around is a bad thing almost ever, if you've been seated for a long time, if you're feeling tired, for things like attention or health more generally.

Travis

Yeah, that sounds like another great life hack. Well, both of those: try to limit notifications and, when you can, take a little walk in a garden.

Ben

There's evidence for even just a quick walk around the building. Yes, going and getting 30 minutes of strenuous exercise that gets your heart pumping is the best thing. But even just getting up and walking around outside briefly, getting a little bit of that movement, there's some evidence that can be quite positive. There's all this research on like taking breaks from periods of work and studying. It seems like the breaks can be restorative or helpful. And, you know, that's the sort of thing where if you like being outside, it makes you happier. There's probably no reason not to do that.

(music)

Travis

And thanks to Ben for helping us better understand the types of things we can do to best take care of our brains and our own cognition. If you or someone you know would make for a great curious conversation, email me at traviskw at vt.edu. I'm Travis Williams and this has been Virginia Tech's Curious Conversations.

(music)

About Katz

Ben Katz is associate professor of adult development and aging within the Department of Human Development and Family Science at Virginia Tech. His research focuses on interventions to improve executive function capacities, including non-invasive brain stimulation, cognitive training, and lifestyle change.

Past Episodes

-

General Item

Lifestyle Choices and Brain Health with Ben Katz

Lifestyle Choices and Brain Health with Ben KatzVirginia Tech’s “Curious Conversations” podcast explores how lifestyle choices influence brain health, memory, and cognitive function with Ben Katz.

Date: May 11, 2026 - -

General Item

Cycling Safety and Infrastructure with Laura Hamm

Cycling Safety and Infrastructure with Laura HammVirginia Tech’s “Curious Conversations” podcast explores cycling safety, infrastructure, and research on bicycle and vehicle interactions with Laura Hamm.

Date: May 04, 2026 - -

General Item

Fertilizer and the Iran War with Rory Maguire

Fertilizer and the Iran War with Rory MaguireIn this episode, expert Rory Maguire explores how fertilizer production connects to global conflict, including impacts of the Iran war on agriculture.

Date: Apr 27, 2026 - -

General Item

The Connection Between Renting and Education with Tom Mayock

The Connection Between Renting and Education with Tom MayockIn this episode, Tom Mayock explores the connection between renting and education, including housing access, affordability, and student outcomes.

Date: Apr 20, 2026 - -

General Item

Food Facts with Melissa Wright

Food Facts with Melissa WrightMellisa Wright explores food safety, common misconceptions, and practical tips for handling and preparing food in this episode of “Curious Conversations”.

Date: Apr 13, 2026 - -

General Item

Fungi and Cloud Seeding with Boris Vinatzer

Fungi and Cloud Seeding with Boris VinatzerIn this epsisode, expert Boris Vinatzer explores how fungi influence cloud formation and weather through ice nucleation.

Date: Apr 06, 2026 - -

General Item

Scams and Cybercrime with Katalin Parti

Scams and Cybercrime with Katalin PartiThis episode explores scams and cybercrime, including common tactics and prevention strategies, with expert Katalin Parti.

Date: Mar 30, 2026 - -

General Item

The Chesapeake Bay's Blue Catfish Problem with Michael Schwarz

The Chesapeake Bay's Blue Catfish Problem with Michael SchwarzThis podcast episode explores the Chesapeake Bay’s invasive blue catfish problem, its ecological impacts, and potential solutions with Michael Schwarz.

Date: Mar 23, 2026 - -

General Item

Airborn Pathogen Detection and Mitigation with Linsey Marr

Airborn Pathogen Detection and Mitigation with Linsey MarrVirginia Tech’s “Curious Conversations” podcast explores airborne pathogen detection and mitigation, with expert Linsey Marr.

Date: Mar 16, 2026 - -

General Item

A New Way to Treat Kidney Stones with Adam Maxwell

A New Way to Treat Kidney Stones with Adam MaxwellVirginia Tech’s “Curious Conversations” podcast explores a new way to treat kidney stones using sound waves, with biomedical engineer Adam Maxwell.

Date: Mar 09, 2026 - -

General Item

Antarctic Bottom Water with Brian Romans

Antarctic Bottom Water with Brian RomansIn this episode, Brian Romans discusses Antarctic Bottom Water: how it forms, its role in global ocean circulation, and its climate significance.

Date: Mar 02, 2026 - -

General Item

The Mysteries of Microplastics with Austin Gray

The Mysteries of Microplastics with Austin GrayVirginia Tech’s “Curious Conversations” podcast explores the science of microplastics, their environmental and health impacts, with expert Austin Gray.

Date: Feb 23, 2026 - -

General Item

The Unknowns of Sharks with Francesco Ferretti

The Unknowns of Sharks with Francesco Ferretti"Curious Conversations" podcast episode explores global knowledge gaps in shark ecology and conservation with expert Francesco Ferretti.

Date: Feb 16, 2026 - -

General Item

Data Centers and Water with Landon Marston

Data Centers and Water with Landon MarstonLandon Marston discusses data centers and water use while exploring environmental impacts, cooling demands, and sustainable resource challenges.

Date: Feb 09, 2026 - -

General Item

Detecting Dark Matter with Patrick Huber

Detecting Dark Matter with Patrick HuberPatrick Huber joined Virginia Tech’s “Curious Conversations” to talk about world of neutrino physics and its implications for understanding dark matter.

Date: Feb 02, 2026 - -

General Item

Smart Mobility and the Future of Transportation with Mike Mollenhauer

Mike Mollenhauer joined Virginia Tech’s “Curious Conversations” to talk about how smart mobility and infrastructure are influencing the future of transportation.

Date: Jan 26, 2026

The History of Christmas Music with Ariana Wyatt

In this Curious Conversations episode, Ariana Wyatt delves into the history of Christmas music, from early carols to modern holiday hits.

Date: Dec 08, 2025

3D Printing Homes with Andrew McCoy

Andrew McCoy discusses how 3D-printed concrete homes could address housing scarcity and improve affordability in this Curious Conversations episode.

Date: Dec 01, 2025

Banjo History with Patrick Salmons

Tune in to Virginia Tech’s “Curious Conversations” podcast to hear Patrick Salmons explore the banjo’s origins, cultural history, and evolving meaning.

Date: Nov 24, 2025

Knee Injuries and Recovery with Robin Queen

Robin Queen discusses ACL injuries, knee mechanics, and prevention and recovery strategies for athletes in this “Curious Conversations” podcast episode.

Date: Nov 17, 2025

Black Bears and Observing Wildlife with Marcella Kelly

Marcella Kelly explores black bear behavior and wildlife observation techniques in a podcast episode about ecology and field research.

Date: Nov 10, 2025

The History of Bed Bugs with Lindsay Miles

In this podcast episode, Lindsay Miles explores the genomics and urban evolution of bed bugs, uncovering what their history reveals about humans and pests.

Date: Nov 03, 2025

The Cultural Significance of Ghosts with Shaily Patel

Shaily Patel explores how ghost stories serve as cultural metaphors for trauma, memory and belonging in this podcast episode.

Date: Oct 27, 2025

Adolescent Suicide, Screens, and Sleep with Abhishek Reddy

Abhishek Reddy discusses how screen use, sleep patterns, and medication access relate to adolescent suicide risk and what families can do.

Date: Oct 20, 2025

Drug Discovery and Weight Loss with Webster Santos

Webster Santos discusses insights into drug discovery and weight-loss therapies, exploring scientific advances and health implications.

Date: Oct 13, 2025

Exploring the Mind-Body Connection with Julia Basso

In this episode, Julia Basso explains how dance and movement practices link body and brain, exploring their effects on mood, health, and social connection.

Date: Oct 06, 2025

Controlled Environment Agriculture with Mike Evans

Virginia Tech’s Michael "Mike" Evans discusses innovations in controlled environment agriculture and their role in advancing sustainable food production.

Date: Sep 29, 2025

Ecosystem Forecasting with Cayelan Carey

Cayelan Carey explains how ecosystem forecasting helps predict water quality in lakes and reservoirs using sensor data and modeling tools.

Date: Sep 22, 2025

Building Better with Bamboo with Jonas Hauptman

Jonas Hauptman discusses his research into bamboo as a sustainable building material, exploring its challenges, non-traditional use, and potential for addressing housing needs.

Date: Sep 15, 2025

The Future of 3D Printing with Chris Williams

Chris Williams explains how 3D printing differs from traditional methods, explores various materials, and discusses future applications.

Date: Sep 08, 2025

Bacteriophages' Role in the Gut with Bryan Hsu

Bryan Hsu discusses bacteriophages, their role in gut health, and their potential in addressing antibiotic resistance through phage therapy.

Date: May 12, 2025

Make Sense of Economic Climates with David Bieri

David Bieri discusses the human side of economics, the value of historical context, and the importance of rethinking economic ideas and institutions.

Date: May 05, 2025

The Magic of 'The Magic School Bus' with Matt Wisnioski and Michael Meindl

Matt Wisnioski and Michael Meindl explore how “The Magic School Bus” shaped science, education, and entertainment.

Date: Apr 28, 2025

Using Virtual Reality to Explore History with Eiman Elgewely

Eiman Elgewely joined Virginia Tech’s “Curious Conversations” to talk about her work using virtual reality and the principles of interior design to explore historical spaces.

Date: Apr 21, 2025

Ultra-Processed Foods with Alex DiFeliceantonio

Alex DiFeliceantonio discusses ultra-processed foods, their health impacts, and how dopamine influences food choices in Virginia Tech’s “Curious Conversations.”

Date: Apr 14, 2025

Technology’s Impact on the Appalachian Trail with Shalini Misra

Shalini Misra explores how digital technologies are changing the Appalachian Trail, balancing tradition, accessibility, and environmental preservation.

Date: Apr 07, 2025

The Dangers of Gaze Data with Brendan David-John

Brendan David-John discusses the use of gaze data in virtual and augmented reality, including privacy concerns and current mitigation research.

Date: Mar 31, 2025

Community Dynamics During and After Disasters with Liesel Ritchie

Liesel Ritchie discusses how sociology helps explain community resilience in disasters, the role of social capital, and the importance of local relationships.

Date: Mar 24, 2025

Drone Regulation, Detection, and Mitigation with Tombo Jones

Tombo Jones discusses drone regulations, safety, and counter UAS strategies, highlighting Virginia Tech’s role in advancing uncrewed aircraft systems.

Date: Mar 17, 2025

Public Perception of Affordable Housing with Dustin Read

Dustin Read discusses public perceptions of affordable housing, the role of profit status, and how development size impacts community support.

Date: Mar 10, 2025

Unpacking the Complexities of Packaging with Laszlo Horvath

Laszlo Horvath discusses packaging design complexities, including affordability, sustainability, and the impact of tariffs and supply chain disruptions.

Date: Mar 03, 2025

Engineering Safer Airspace with Ella Atkins

Ella Atkins discusses air travel safety, VFR vs. IFR challenges, recent collisions, and how technology and automation can enhance aviation safety.

Date: Feb 24, 2025

Cancer-Fighting Bubbles with Eli Vlaisavljevich

Eli Vlaisavljevich discusses histotripsy, an ultrasound therapy for cancer, its mechanics, clinical applications, and future directions in treatment.

Date: Feb 17, 2025

Examining the ‘5 Love Languages’ with Louis Hickman

Louis Hickman discusses ‘The 5 Love Languages,’ their impact on relationships, research findings, and the role of personality, self-care, and adaptability.

Date: Feb 10, 2025

The Behavior and Prevention of Wildfires with Adam Coates

Adam Coates explores the factors behind California wildfires, fire behavior science, urban challenges, and the role of prescribed burning in prevention.

Date: Feb 03, 2025

Computer Security in the New Year with Matthew Hicks

Matthew Hicks discusses evolving computer security threats, AI-driven risks, and practical tips to stay secure in 2025.

Date: Jan 27, 2025

Internet of Things Safety and Gift Giving Tips with Christine Julien

Christine Julien discusses the Internet of Things, its definition, potential vulnerabilities, and the implications of using smart devices.

Date: Dec 09, 2024

Neurodiversity and the Holidays with Lavinia Uscatescu and Hunter Tufarelli

In this episode, the guests discuss neurodiversity during the holidays, exploring how traditions and social expectations affect differently wired minds.

Date: Dec 02, 2024

AI and Better Classroom Discussions with Yan Chen

Yan Chen discusses how AI can improve peer instruction and classroom discussions, using tools to help instructors monitor and support student engagement.

Date: Nov 25, 2024

Forest Health and Natural Disasters with Carrie Fearer

Carrie Fearer joins “Curious Conversations” to discuss forest health after natural disasters and ways to restore ecosystems.

Date: Nov 18, 2024

Subduction Zones, Earthquakes, and Tsunamis with Tina Dura

Tina Dura talks about subduction zones, particularly the Cascadia Subduction Zone, as well as earthquakes and tsunamis.

Date: Nov 11, 2024

Turning Old Plastic into Soap with Guoliang “Greg” Liu

In this episode, Guoliang “Greg” Liu talks about his journey in sustainability, focusing on the innovative process of converting plastic waste into soap.

Date: Nov 04, 2024

Emerging Technologies and Entrepreneurship with James Harder

James Harder explores how emerging technologies fuel entrepreneurship and shape the future of startups in this Curious Conversations episode.

Date: Oct 28, 2024

AI and Emergency Management with Shalini Misra

In this episode, Shalini Misra examines how artificial intelligence could support emergency management, from disaster planning to ethical use concerns.

Date: Oct 21, 2024

Female Leaders of Nations and the U.S. Presidency with Farida Jalalzai

Farida Jalalzai explores female leadership worldwide and why no woman has yet held the U.S. presidency in this Curious Conversations podcast episode.

Date: Oct 14, 2024

AI and Securing Water Systems with Feras Batarseh

In this episode, Feras Batarseh discusses the intersection of water systems and technology, specifically focusing on aspects of artificial intelligence.

Date: Oct 07, 2024

Alcohol Use and Intimate Partner Violence with Meagan Brem

In this episode, Meagan Brem talks about the intersection of alcohol use and intimate partner violence and the causal relationship between the two.

Date: Sep 30, 2024

Brain Chemistry and Neuroeconomics with Read Montague

Read Montague explores how dopamine and serotonin shape decision-making, memory, mood, and motivation at the intersection of brain chemistry and neuroeconomics.

Date: Sep 23, 2024

The Future of Wireless Networks with Lingjia Liu

Lingjia Liu joins the "Curious Conversations" podcast to talk about the future of wireless networks.

Date: Sep 16, 2024

The Mung Bean and Reducing Hunger in Senegal with Ozzie Abaye

In this episode, Ozzie Abaye discusses her work using the mung bean to diversify the cropping system, empower farmers, and reduce hunger in Senegal, Africa.

Date: Sep 10, 2024

Curbing the Threat of Invasive Species with Jacob Barney

In this episode, Jacob Barney talks about invasive species, their impact on native species, and the challenges of managing them.

Date: Sep 02, 2024

Making Motorcycle Riding Safer Around the Globe with Richard Hanowski

In this episode, Richard Hanowski talks about harnessing research to help make motorcycle riding safer in low- and middle-income countries.

Date: Aug 27, 2024

The Evolution of Political Polling with Karen Hult

In this episode, Karen Hult discusses the history and evolution of polling, modern polling methods, and how to interpret poll results.

Date: Aug 20, 2024

Navigating Back-to-School Emotions with Rosanna Breaux

In this episode, Rosanna Breaux discusses back-to-school emotions and strategies for students, parents, and educators.

Date: Aug 05, 2024

Geologic Carbon Sequestration with Ryan Pollyea

Ryan Pollyea discusses geologic carbon sequestration, how it stores CO₂ underground, and its role in addressing climate change in this Curious Conversations episode.

Date: Jun 04, 2024

Veterans and Mass Incarceration with Jason Higgins

Jason Higgins joins the "Curious Conversations" podcast to highlight the intersection of United States military veterans and mass incarceration.

Date: May 28, 2024

Microplastics, the Ocean, and the Atmosphere with Hosein Foroutan

In this episode, Hosein Foroutan explores microplastics in the ocean and atmosphere: their sources, impacts, and what science can do about them.

Date: May 21, 2024

Real Estate Values and Elections with Sherwood Clements

Sherwood Clements examines how changes in home values may influence voter behavior, exploring the connection between real estate trends and presidential elections.

Date: May 14, 2024

AI and the Hiring Process with Louis Hickman

In this episode, Louis Hickman discusses how artificial intelligence could influence hiring, from screening and bias to improving recruitment outcomes.

Date: May 06, 2024

Exploring the Human-Dog Relationship with Courtney Sexton

Courtney Sexton joined Virginia Tech’s “Curious Conversations” podcast to talk about the unique relationship between humans and dogs.

Date: Apr 30, 2024

The Chemistry of Earth History with Ben Gill

Ben Gill joined Virginia Tech’s “Curious Conversations” to chat about piecing together Earth history through a combination of geology and chemistry.

Date: Apr 23, 2024

Circular Economies with Jennifer Russell

Jennifer Russell joined Virginia Tech’s “Curious Conversations” podcast to talk about the concept of a circular economy.

Date: Apr 16, 2024

The History of Virginia Tech's Helmet Lab with Stefan Duma

In this Curious Conversations episode, Stefan Duma recounts the history of Virginia Tech’s Helmet Lab and its impact on head-injury research and safety.

Date: Apr 09, 2024

The History of Food Waste with Anna Zeide

Anna Zeide joined Virginia Tech’s “Curious Conversations” to talk about the history of food waste in America and its impact on society and the environment.

Date: Apr 02, 2024

The Dog Aging Project with Audrey Ruple

In this episode, Audrey Ruple discusses the Dog Aging Project, exploring canine aging, health patterns, and what dogs can teach us about longevity.

Date: Mar 26, 2024

All About Air Pollution with Gabriel Isaacman-VanWertz

Gabriel Isaacman-VanWertz joined Virginia Tech’s “Curious Conversations” to talk about air pollution and common misconceptions about it.

Date: Mar 19, 2024

Righting a Wrong Understanding of Newton's Law with Daniel Hoek

Daniel Hoek joined Virginia Tech’s “Curious Conversations” to talk about the recent discovery he made related to Newton's first law of motion.

Date: Mar 11, 2024

Measuring the Risks of Sinking Land with Manoochehr Shirzaei

Manoochehr Shirzaei discusses land subsidence, its role in climate change, and how satellite data creates maps to guide local decisions.

Date: Mar 05, 2024

Emerging Technology and Tourism with Zheng "Phil" Xiang

Zheng "Phil" Xiang joins the "Curious Conversations" podcast to talk about the intersection of technology and tourism.

Date: Feb 27, 2024

AI and Education with Andrew Katz

Andrew Katz explores how artificial intelligence could transform education, impacting teaching, feedback, and learning in this episode.

Date: Feb 20, 2024

Warm, Fuzzy Feelings and Relationships with Rose Wesche

In this Curious Conversations episode, Rose Wesche explores warm-fuzzy feelings and the science of relationships, from attachment to emotional connection.

Date: Feb 13, 2024

The Future of Wireless Networks with Luiz DaSilva

In this episode, Luiz DaSilva talks about wireless networks and the Commonwealth Cyber Initiative's test beds.

Date: Feb 06, 2024

The Positive Impacts of Bird Feeding with Ashley Dayer

Ashley Dayer explores how bird feeding benefits human well-being and shares insights from a new project at the intersection of birds and people.

Date: Jan 30, 2024

Sticking to Healthy Changes with Samantha Harden

Samantha Harden joined Virginia Tech’s “Curious Conversations” to chat about the science behind developing and keeping healthy habits.

Date: Jan 16, 2024

Screen Time and Young Children with Koeun Choi

In this episode, Koeun Choi discusses how media affects young children and shares a project using AI to support early reading development.

Date: Dec 11, 2023

The History of Holiday Foods with Anna Zeide

Anna Zeide explores the history of winter holiday foods and how personal traditions surrounding them are created and evolve over time.

Date: Dec 04, 2023

The Chemistry of Better Batteries with Feng Lin

Feng Lin explains the chemistry of electric vehicle batteries, current production challenges, and how coal might contribute to future solutions.

Date: Nov 27, 2023

AI as a Personal Assistant with Ismini Lourentzou

In this episode, Ismini Lourentzou discusses AI, personal assistants, and her student team’s experience in the Alexa Prize TaskBot Challenge 2.

Date: Nov 20, 2023

The Power of International Collaborations with Roop Mahajan

Roop Mahajan discusses how international collaborations have advanced his graphene research and their broader importance to innovation and scientific progress.

Date: Nov 13, 2023

Driving around Heavy Trucks with Matt Camden and Scott Tidwell

Matt Camden and Scott Tidwell discuss VTTI’s Sharing the Road program and share practical safety tips for drivers of all ages.

Date: Nov 06, 2023

Autonomous Technology and Mining with Erik Westman

Erik Westman explores how machine learning and autonomous tech are reshaping mining and how Virginia Tech prepares students.

Date: Oct 30, 2023

Agriculture Technology and Farmers with Maaz Gardezi

In this episode, Maaz Gardezi discusses the importance of developing agricultural technology in collaboration with farmers and incorporating their input.

Date: Oct 23, 2023

AI and Healthcare Workspaces with Sarah Henrickson Parker

Sarah Henrickson Parker discusses how AI and machine learning are currently used in some healthcare spaces and what the potential is for the future.

Date: Oct 16, 2023

AI and Online Threats with Bimal Viswanath

In this episode, Bimal Viswanath discusses how the rise of artificial intelligence and large language models has changed the online threat landscape.

Date: Oct 09, 2023

AI and the Workforce with Cayce Myers

In this episode, Cayce Myers fields questions on artificial intelligence’s impact on the workforce, regulations, copyright law, and more.

Date: Oct 02, 2023

Special Edition: The GAP Report with Tom Thompson and Jessica Agnew

Tom Thompson and Jessica Agnew of the GAP Report joined the podcast just before the report's 2023 release to explain what it is and how they hope it is used.

Date: Oct 01, 2023

The Metaverse, Digital Twins, and Green AI with Walid Saad

In this episode, Walid Saad fields questions about the metaverse, digital twins, and artificial intelligence’s potential impact on the environment.

Date: Sep 24, 2023

Semiconductors, Packaging, and more with Christina Dimarino

Christina Dimarino discusses semiconductors, the role of packaging in onshoring their production, and Virginia Tech's efforts for workforce development in this field.

Date: Sep 15, 2023

Pilot: Electric Vehicles with Hesham Rakha

In this pilot episode, Hesham Rakha shares insights on what sustainable mobility means and some of his personal experiences with an electric car.

Date: Aug 14, 2023

Podcast Host

About the Podcast

"Curious Conversations" is a series of free-flowing conversations with Virginia Tech researchers that take place at the intersection of world-class research and everyday life.

Produced and hosted by Virginia Tech writer and editor Travis Williams, the podcast features university researchers sharing their expertise and motivations, as well as the practical applications of their work, in a format that more closely resembles chats at a cookout than classroom lectures. New episodes are shared each Tuesday.

If you know of an expert (or are that expert) who’d make for a great conversation, email Travis today.